The first thing to say about the TOP 100 CLG '26 list is that it was hard to make. Five hundred and twenty nominations came in. The community voting round did real work, but it did not pick the list by itself. The judges argued. Some of the cuts were close. We are still arguing internally about a couple of them.

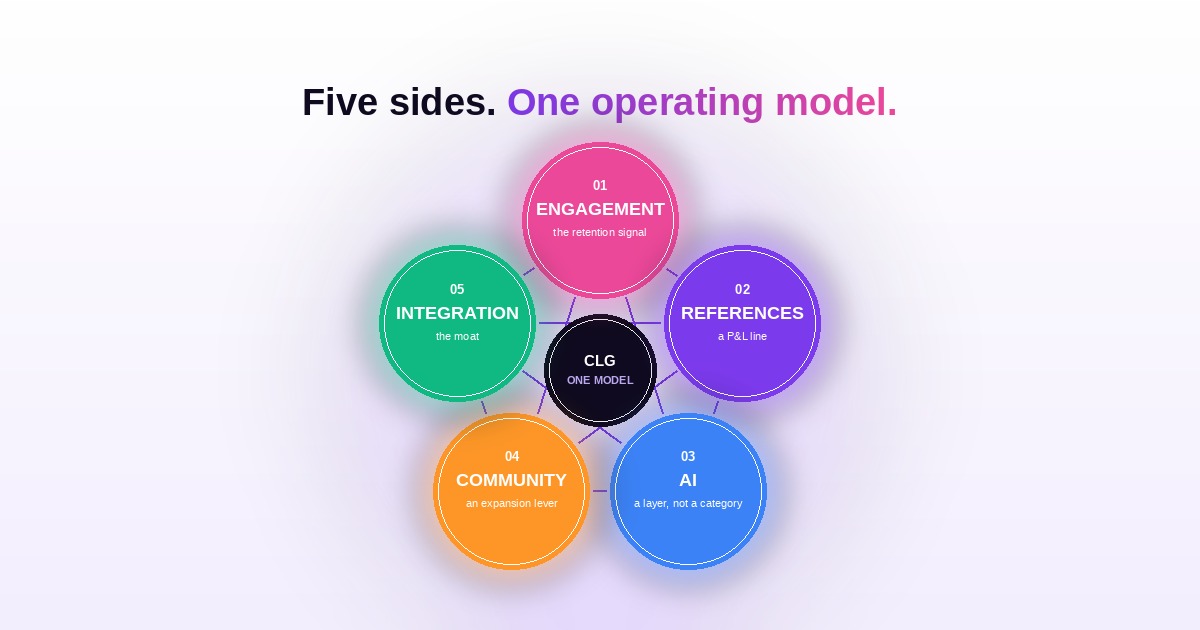

What we want to write about is not who got picked. The cards on the TOP 100 CLG '26 page already tell those stories one by one, and you can read them yourself. What we want to write about is what showed up in the dataset when we stepped back from the cards. Five patterns kept appearing across categories that are supposed to be different from each other (advocacy, references, digital CS, community, expansion) and across companies that look nothing like each other. The fact that they appeared everywhere is what convinced us they are not five trends in five lanes. They are five sides of one operating model, and the leaders on this year's list are the first cohort to be running all five of them at once.

Here they are.

For a long time, customer engagement was a soft metric that lived next to NPS. The people who took it seriously were customer marketers, not CS leaders, and certainly not finance. The TOP 100 list made it a hard metric.

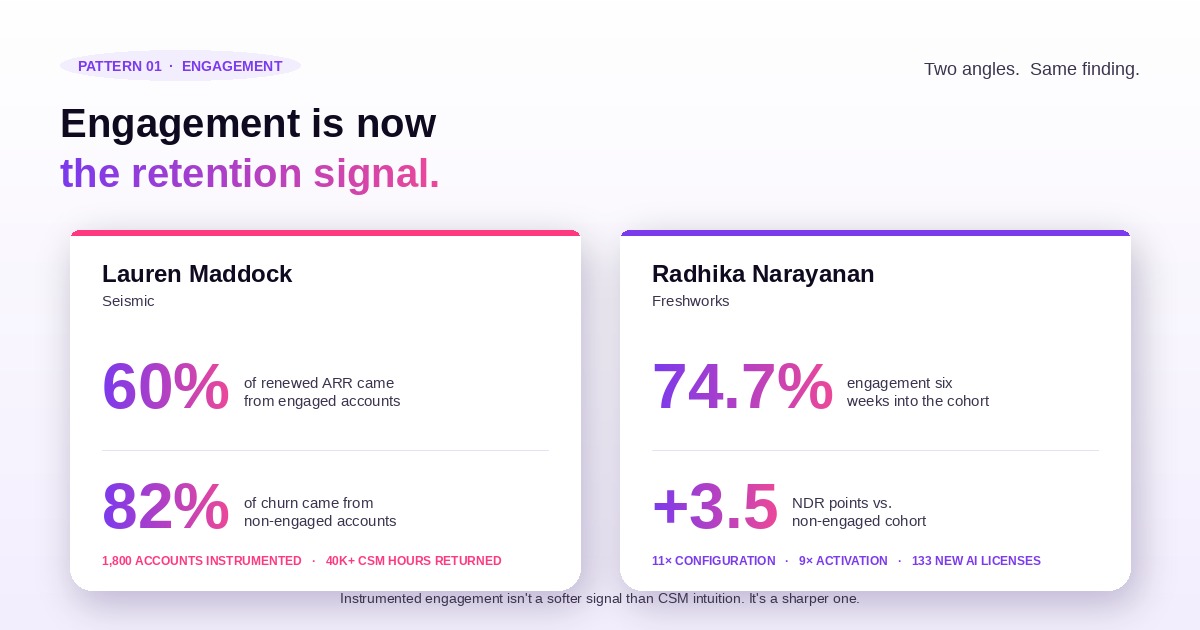

Lauren Maddock at Seismic is the cleanest case. Her team transformed the Seismic community from a support forum into a digital CS engine, with product webinars, practitioner-led Power Plays, peer-driven Brain Dates, and partner sessions, instrumented at the account level across roughly 1,800 accounts. The two numbers that came back are not CS-leadership talking points. They are forecasting inputs. 60% of renewed ARR came from engaged accounts. 82% of churn came from non-engaged accounts. Once those two numbers are on the table, every renewal forecast in the building either uses engagement as an input or admits it is running on less information than it could. Lauren's team also returned 40,000+ hours to its CSMs by reducing reliance on 1:1 interactions, but the predictive piece is the headline.

Radhika Narayanan at Freshworks made the same case from a different angle. Strong sales momentum was running into low post-purchase activation: under-configured licenses, inconsistent agent activation, plateauing adoption, and rising churn risk. Instead of throwing more CSMs at the gap, she built two journeys: a 21-day onboarding journey to shorten time-to-first-value and a 45-day adoption journey to reactivate low adopters. Both ran on cohort-based governance with entry/suppression rules and re-entry logic, were synchronized across email, in-app nudges, masterclass webinars, certifications, community pathways, and white-glove CSM touches, and were governed by a cross-functional Adoption Steering Committee. Six weeks in, the cohort hit 74.7% engagement, configuration rose 11x, activation rose 9x, customers expanded into 133 new AI licenses, and the engaged cohort outperformed the non-engaged cohort by 3.5 NDR points quarter over quarter (107.1% versus 103.6%). Same finding, different mechanism: instrumented engagement is not a softer signal than CSM intuition. It is a sharper one.

For most of the last decade, reference programs lived somewhere between "useful" and "underfunded." The TOP 100 closed that gap.

Kara Manfredi at Sage Intacct is the proof point. With a partner she affectionately calls a "Wonder Twin," she embedded customer references into sales and marketing workflows, with operational guardrails strong enough to scale the program without breaking customer trust. The numbers walk her program from "useful" to "23% of all deal outcomes." $5.1M influenced in FY23. $8.6M in FY24. $11.5M in FY25. Another $4.4M YTD in FY26. Reference engagements scaled from 479 to 864 to 1,104 to 1,276 YTD. That is not a support function tucked under marketing ops. That is roughly a quarter of the deal book attributed to a program most companies still staff with one or two people.

Jeff Livingston at ADP made the same point at a different scale. His title is Director of Client Advocacy for MAS and Canada, and the program he runs has 8,500 Ambassadors growing 20% YoY, a 1,950-quote UserEvidence database, a remote video testimonial platform that quadrupled output at half the cost, a top-5% HR podcast 49 episodes deep, and an Executive Client Advisory Board that shapes ADP's product roadmap. The receipts: $55.5M in influenced revenue, 6,200+ client references, 251 reference conversations per month, 1.74-day average turnaround. When a single reference program is contributing $11.5M and a single advocacy community is influencing $55.5M, "reference management" stops being a category at the bottom of the org chart and starts being a P&L line that gets defended like one.

The third pattern matters most because it is the one most likely to keep being misread for the next twelve months unless we name what it actually is.

The award has a category called "Utilizing AI in Customer Programs," and 15 winners came from it. The interesting read is what happened outside the category.

Inside the category, Kristi Faltorusso is the canonical example. She built a custom GPT that ingests call transcripts, listens for competitive signals and value-messaging patterns, scores each deal on competitive risk, and serves CSMs a tailored playbook by competitor and lifecycle stage. Result: an 18% increase in retention for competitive deals inside the critical 180-day renewal window. Sneha Iyer at Observe.AI (#7) is also there, and her contribution is the upstream version: First Mile Intelligence (300+ past deployments turned into reusable context, KPI logic, and data-readiness briefs) and a Value Modeling Engine that maps every scenario, objection, and agent behavior into CFO-credible projections. The outcomes: 40% faster time-to-value, 50% faster CS ramp, ROI models built in 20 minutes instead of 6 to 8 analyst hours, $20M+ influenced revenue, 73% upsell win rate, 80%+ renewal rates on Value-Modeling-backed deals.

Outside the AI category, the same moves are bleeding into every other category on the list. Reference programs are getting AI-augmented orchestration. Advocacy programs are using AI to identify advocates at the right moment in their lifecycle. Digital CS programs are feeding AI signals into adoption nudges. Member Success programs (Amber Frye at Alloy Labs) are using a custom AI assistant that cuts strategy-session prep time by 40% while increasing personalization, on the way to lifting retention from 86% to 96% and growing program participation 70%.

Marco Carrubba at LinkedIn wrote the line that captures what the dataset is actually doing. "AI removes friction, accelerates insight, and personalizes engagement, while human connection builds the trust that turns customers into collaborators, collaborators into advocates, and advocates into growth engines." That is not "AI as a category." That is AI as a layer underneath every category. The TOP 100 are the cohort that started treating it that way.

For a long time, community programs got measured on engagement metrics: visits, posts, replies. The TOP 100 measured them on the line CFOs care about.

Andressa Oliveira at RD Station built Conecta, a B2B customer community that scaled to 10,000+ monthly visits in 2025. The metric that matters is not the visits. It is what happened to the customers who showed up. 3x higher adoption. 50% higher expansion rates among engaged users. She also stood up Prime Conecta, an exclusive community for marketing and sales leaders in Brazil, to deepen relationships with the most strategic accounts. The point of community in 2025 stopped being "brand affinity." It started being "the engaged cohort is the most expansion-ready cohort on the book."

Sonia Starova at Temenos made the same case in a different industry. She deepened relationships with 30%+ of her Ambassador community, hosted 200+ Ambassadors at the annual event, and ran in-depth interviews that produced 20+ success stories and 12 video testimonials. The output was not softer relationships. It was a 350% increase in SaaS and AI case studies, an 81.8% rise in Base Portal ASKs year over year, and a Customer Success Stories page that now ranks among Temenos' top 23 company pages globally, through organic growth alone. Peer-to-peer beats broadcast. The 2026 list is where that became measurable.

The fifth pattern ties the other four together. It sat at the top of the final list because you cannot run the other four well unless they are connected to each other.

The story of Majhon Phillips, Head of Services and Success, Americas at UpSlide, is not about a single program. It is about how the programs connect. She designed an integrated lifecycle model that wove CSM-sourced expansion plays, Scale CSM motions, Solutions Spotlight cross-sell campaigns, and an executive community program called "The Slide Show" into a single connected journey. The result: expansion pipeline grew 585% (from $292K to $2M). NRR climbed from 109% to 114%. Churn fell from 6% to 2.5%. The program delivered 75+ strategic product pitches and 52% executive event attendance. It piloted in the US and is now scaling globally as UpSlide's blueprint for revenue-orchestrating CS.

What separates Majhon's program from the other programs on the list is not any single tactic. It is that the tactics are not separate from each other. The CSM motion, the scale motion, the cross-sell campaign, and the executive community all run on the same operating model. When one part of the lifecycle moves, the others know about it. Most of the market is still running them as four programs, with four owners, four roadmaps, four sets of metrics, and the integration handled by spreadsheets if it is handled at all.

If you read the TOP 100 CLG '26 list as a snapshot of what individual leaders did, you see 100 great stories. Useful. Borrow whichever ones fit your team.

If you read it as a dataset, what you see is that customer-led growth has stopped being a function and started being an operating model. The five patterns above are not five separate strategies. They are five sides of the same model, and the gap between the leaders on this year's list and everyone else is not that the leaders are running better individual programs. It is that they are running their programs as one connected system.

The full list (and the 19 consultants who shaped this year's program) is at base.ai/top100-clg-2026. The five patterns are already running somewhere on it. The question worth asking, before the next planning meeting, is how many of them are running on yours.
